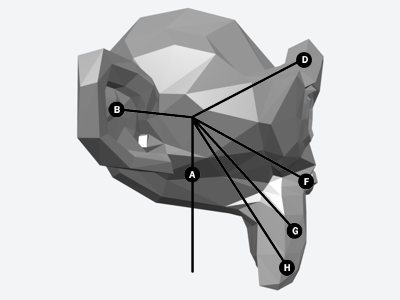

Since a few people have emailed me asking about this, here is some more info on the control system I am developing for Panda Puppet: how it works, or at least how I am currently trying to make it work.

Panda Puppet is shaping up as a Python plug-in for Blender. At this stage I am not trying to make it do anything that isn't already possible in Blender's Game Engine (GE); I just want to streamline the way characters are set up and controlled in real time. The core of Blender's GE is Logic Bricks (sometimes called Logic Blocks), which are used to set up and control interactions between game elements such as characters and props.

This is what Blender's Game Logic Panel looks like:

Once again, I won't go into all the technical nitty-gritty of Logic Bricks here, but if you want to learn more just click on the link above.

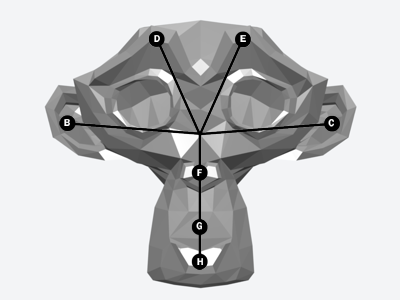

Panda Puppet's control system is a simplified interface for quickly setting up Logic Bricks in a way that's ideal for controlling digital characters. I am still in the early stages of programming this, so all of it may change, but this is how I am designing the control system right now:


The sensor type is the type of device used by the puppeteer to control an on-screen character or object. This can be almost any kind of device imaginable, including a joystick, keyboard, gamepad, dataglove or even a Wiimote. I want Panda Puppet to be as flexible and "controller agnostic" as possible. Rather than forcing a puppeteer to adapt to a specific type of input device, I want a control system that can be customized to the needs and preferences of each puppeteer, and I want puppeteers performing in a scene together to be able to use different types of controls if they want.

Sensor Input

Sensor inputs are specific types of input from an input device, for example the press of a button on a keyboard or the x-y axis movement of a joystick.
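To make the "controller agnostic" idea a little more concrete, here is a minimal Python sketch of how sensor inputs could be described independently of the device they come from. None of these names (`InputKind`, `SensorInput`, `normalize_axis`) come from Blender or from Panda Puppet itself. They are hypothetical, and so are the raw axis ranges, which vary from device to device.

```python
from dataclasses import dataclass
from enum import Enum


class InputKind(Enum):
    BUTTON = "button"  # on/off input, e.g. a key or a joystick button
    AXIS = "axis"      # continuous input, e.g. one axis of a joystick


@dataclass(frozen=True)
class SensorInput:
    """One specific input on one device (hypothetical, not a Blender type)."""
    device: str       # the sensor type, e.g. "keyboard", "joystick", "wiimote"
    name: str         # the specific control, e.g. "space" or "x_axis"
    kind: InputKind


def normalize_axis(raw: float, raw_min: float, raw_max: float) -> float:
    """Map a device's raw axis reading onto -1.0..1.0, clamping out-of-range values."""
    value = 2.0 * (raw - raw_min) / (raw_max - raw_min) - 1.0
    return max(-1.0, min(1.0, value))


# Two different devices described in the same vocabulary.
stick_x = SensorInput("joystick", "x_axis", InputKind.AXIS)
space = SensorInput("keyboard", "space", InputKind.BUTTON)
```

Because everything downstream only sees axis values in -1.0..1.0 or a pressed/released button, swapping a joystick for a Wiimote should mostly mean changing the raw range, not the character's controls.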

Sensor Action

Sensor Actions are control types that govern a character's actions: they determine what happens when a puppeteer uses a specific sensor input. There are three types of Sensor Action: direct control, pose control and emotion state control.
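One way the three Sensor Actions might be bound to sensor inputs is a simple per-puppeteer lookup table keyed by (device, input name). This sketch assumes that design. The table contents and the `ActionType`/`lookup` names are illustrative only.

```python
from __future__ import annotations

from enum import Enum
from typing import Optional


class ActionType(Enum):
    DIRECT = "direct"    # continuous control of part of the character
    POSE = "pose"        # triggers a specific pose or movement
    EMOTION = "emotion"  # holds an emotion state while the input is active


# Hypothetical per-puppeteer binding table: (device, input) -> (action type, target).
# A second puppeteer in the same scene could use a different table and device.
bindings = {
    ("joystick", "xy_axes"): (ActionType.DIRECT, "walk"),
    ("joystick", "button_1"): (ActionType.POSE, "double_take"),
    ("joystick", "trigger"): (ActionType.EMOTION, "happy"),
    ("keyboard", "s"): (ActionType.POSE, "shriek"),
}


def lookup(device: str, input_name: str) -> Optional[tuple[ActionType, str]]:
    """Return the action bound to an input, or None if the input is unbound."""
    return bindings.get((device, input_name))
```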

Direct Control

Using Direct Control, a puppeteer has direct control over the movement of a specific part of a digital character. For example, the x-y "walking" movement of a character can be assigned to the x-y axis movement of a joystick, so that when the joystick moves in a given direction, the character walks in the corresponding direction.
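The joystick-to-walking example could look roughly like the sketch below. It assumes the axes have already been normalized to -1.0..1.0. `walk_velocity`, `max_speed` (in scene units per second) and `dead_zone` are made-up names and tuning values. The dead zone is an added assumption that keeps a resting stick from making the character drift.

```python
from __future__ import annotations

import math


def walk_velocity(x: float, y: float, max_speed: float = 2.0,
                  dead_zone: float = 0.1) -> tuple[float, float]:
    """Map normalized joystick axes (-1.0..1.0) to a walking velocity.

    The stick direction becomes the walking direction; how far the stick is
    pushed sets the speed, up to max_speed.
    """
    magnitude = math.hypot(x, y)
    if magnitude < dead_zone:
        # Ignore small offsets so a resting stick doesn't make the character drift.
        return (0.0, 0.0)
    # Cap the magnitude at 1.0 so pushing diagonally isn't faster than straight ahead.
    scale = min(magnitude, 1.0) / magnitude * max_speed
    return (x * scale, y * scale)
```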

Pose Control

Pose Control is used to assign specific poses or movements to a specific sensor input. For example, an elaborate shriek or a comedic double-take could be triggered by a joystick button.
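Pose Control is a one-shot trigger. One reasonable design, which is an assumption and not necessarily how Panda Puppet will end up doing it, is to fire the pose only on the frame where the button goes from released to pressed. `PoseTrigger` is a hypothetical name.

```python
from __future__ import annotations

from typing import Optional


class PoseTrigger:
    """Fire a named pose once when a button goes from released to pressed.

    Holding the button down does not retrigger the pose; the puppeteer has
    to release it and press again.
    """

    def __init__(self, pose_name: str):
        self.pose_name = pose_name
        self._was_pressed = False

    def update(self, pressed: bool) -> Optional[str]:
        """Call once per frame; returns the pose name on the press frame, else None."""
        fired = pressed and not self._was_pressed
        self._was_pressed = pressed
        return self.pose_name if fired else None
```

For example, feeding the button states `False, True, True, False, True` over five frames fires the pose on the second and fifth frames only.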

Emotion State Control

Emotion State Control influences the movements and poses of a character according to the character's emotion state. An emotion state can be assigned to a specific sensor input. It is triggered when that input is activated and influences the character for as long as the input remains active. For example, if the emotion state "happy" is assigned to a joystick's trigger, the character will remain "happy" as long as the trigger is pressed.
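Unlike a pose, an emotion state lasts for as long as its input is held. Here is a minimal sketch, with hypothetical class and input names, that tracks which bound inputs are currently held and reports the emotion states that should be influencing the character.

```python
from __future__ import annotations


class EmotionStateControl:
    """Track which emotion states are active from the inputs currently held."""

    def __init__(self, bindings: dict[str, str]):
        # Maps an input name to an emotion state, e.g. {"trigger": "happy"}.
        self.bindings = bindings
        self.held: set[str] = set()

    def update(self, input_name: str, pressed: bool) -> None:
        """Record a press or release; inputs without a binding are ignored."""
        if input_name not in self.bindings:
            return
        if pressed:
            self.held.add(input_name)
        else:
            self.held.discard(input_name)

    def active_emotions(self) -> set[str]:
        """Emotion states that should currently influence the character."""
        return {self.bindings[name] for name in self.held}
```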

Six emotional states are commonly treated as primary: anger, disgust, fear, joy, sadness and surprise. Just as primary colours can be modified and mixed to create every other imaginable colour, primary emotional states can also be mixed to create every emotional state imaginable. For example, Anger + Disgust = Rage. Emotion state combinations can be triggered in two ways: by activating a combination of sensor inputs (when primary emotion states are assigned to individual sensor inputs), or by grouping a mixed emotion state and triggering it with a single sensor input.
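Both ways of triggering combinations can be handled by a single resolver. In this sketch, each input maps to a set of primary emotions, which can be a single primary or a pre-grouped mix. The sets for all held inputs are merged, and the result is looked up in a mixing table. Only "anger + disgust = rage" comes from the text above. The `MIXES` table, the fallback labels and the function names are assumptions.

```python
from __future__ import annotations

PRIMARY = {"anger", "disgust", "fear", "joy", "sadness", "surprise"}

# Hypothetical mixing table; only anger + disgust = rage comes from the post.
MIXES = {
    frozenset({"anger", "disgust"}): "rage",
}


def emotions_for_inputs(held_inputs: set[str], input_map: dict[str, set[str]]) -> set[str]:
    """Merge the primary emotions bound to every held input.

    Each input maps to a set, so it can carry one primary emotion or a
    pre-grouped mix triggered by a single button.
    """
    active: set[str] = set()
    for name in held_inputs:
        active |= input_map.get(name, set())
    return active


def resolve_emotion(active: set[str]) -> str:
    """Name the combined state of the active primary emotions.

    Known combinations come from MIXES; a lone primary is returned as-is;
    unknown combinations get a "+"-joined label; nothing active is "neutral".
    Names that are not primary emotions are ignored.
    """
    primaries = frozenset(e for e in active if e in PRIMARY)
    if not primaries:
        return "neutral"
    if primaries in MIXES:
        return MIXES[primaries]
    return "+".join(sorted(primaries))


# Anger and disgust on separate buttons, plus a third button bound to the mix.
input_map = {
    "button_1": {"anger"},
    "button_2": {"disgust"},
    "button_3": {"anger", "disgust"},
}
```

Holding `button_1` and `button_2` together and pressing `button_3` on its own both resolve to "rage", which covers both of the triggering styles described above.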

If anyone out there has thoughts, ideas and/or suggestions about all of this, please feel free to drop me a line at puppetvision {at} gmail dot com.